Tesseract OCR Tutorial for iOS

In this tutorial, you’ll learn how to use the Tesseract OCR engine to read and manipulate text extracted from images. By Lyndsey Scott.

Contents

Tesseract OCR Tutorial for iOS

25 mins

- Getting Started

- Tesseract’s Limitations

- Adding the Tesseract Framework

- How Tesseract OCR Works

- Adding Trained Data

- Loading the Image

- Implementing Tesseract OCR

- Processing Your First Image

- Scaling Images While Preserving Aspect Ratio

- Improving OCR Accuracy

- Improving Image Quality

- Where to Go From Here?

We at raywenderlich.com have figured out a sure-fire way to fulfill your true heart’s desire — and you’re about to build the app to make it happen with the help of Optical Character Recognition (OCR).

OCR is the process of electronically extracting text from images. You’ve undoubtedly seen it before: it’s widely used to process everything from scanned documents, to the handwritten scribbles on your tablet PC, to the Word Lens technology in the Google Translate app.

In this tutorial, you’ll learn how to use Tesseract, an open-source OCR engine maintained by Google, to grab text from a love poem and make it your own. Get ready to impress!

U + OCR = LUV

Getting Started

Download the materials for this tutorial by clicking the Download Materials button at the top or bottom of this page, then extract the folder to a convenient location.

The Love In A Snap directory contains three others:

- Love In A Snap Starter: The starter project for this tutorial.

- Love In A Snap Final: The final project.

- Resources: The image you’ll process with OCR and a directory containing the Tesseract language data.

Open Love In A Snap Starter/Love In A Snap.xcodeproj in Xcode, then build and run the starter app. Click around a bit to get a feel for the UI.

Back in Xcode, take a look at ViewController.swift. It already contains a few @IBOutlets and empty @IBAction methods that link the view controller to its pre-made Main.storyboard interface. It also contains performImageRecognition(_:) where Tesseract will eventually do its work.

Scroll farther down the page and you’ll see:

    // 1
    // MARK: - UINavigationControllerDelegate
    extension ViewController: UINavigationControllerDelegate {
    }

    // 2
    // MARK: - UIImagePickerControllerDelegate
    extension ViewController: UIImagePickerControllerDelegate {
      // 3
      func imagePickerController(_ picker: UIImagePickerController,
        didFinishPickingMediaWithInfo info: [UIImagePickerController.InfoKey : Any]) {
        // TODO: Add more code here...
      }
    }

1. The UIImagePickerController that you’ll eventually add to facilitate image loading requires UINavigationControllerDelegate to access the image picker controller’s delegate functions.

2. The image picker also requires UIImagePickerControllerDelegate to access the image picker controller’s delegate functions.

3. The imagePickerController(_:didFinishPickingMediaWithInfo:) delegate function will return the selected image.

Now, it’s your turn to take the reins and bring this app to life!

Tesseract’s Limitations

Tesseract OCR is quite powerful, but does have the following limitations:

- Unlike some OCR engines — like those used by the U.S. Postal Service to sort mail — Tesseract isn’t trained to recognize handwriting, and it’s limited to about 100 fonts in total.

- Tesseract requires a bit of preprocessing to improve the OCR results: Images need to be scaled appropriately, have as much image contrast as possible, and the text must be horizontally aligned.

- Finally, Tesseract OCR only works on Linux, Windows and Mac OS X.

Wait. WHAT?

Uh oh! How are you going to use this in iOS? Luckily, Nexor Technology has created a compatible wrapper for Tesseract OCR that you can use from Swift.
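To make the scaling requirement from the list above more concrete, here’s a minimal sketch of the size math involved in shrinking an image while preserving its aspect ratio. PixelSize and scaledSize(for:maxDimension:) are hypothetical names used only for illustration; they aren’t part of Tesseract or of the starter project, and a real app would apply the resulting size when redrawing a UIImage.

```swift
import Foundation

// Hypothetical value type standing in for an image's pixel dimensions.
struct PixelSize: Equatable {
  var width: Double
  var height: Double
}

// Hypothetical helper: shrinks a size so its longer side is at most
// maxDimension, keeping the width-to-height ratio unchanged.
// Sizes that already fit are returned as they are (no upscaling).
func scaledSize(for original: PixelSize, maxDimension: Double) -> PixelSize {
  let longestSide = max(original.width, original.height)
  guard longestSide > 0 else { return original }

  // Use one scale factor for both sides so the aspect ratio is preserved.
  let scale = min(1, maxDimension / longestSide)
  return PixelSize(width: original.width * scale,
                   height: original.height * scale)
}

// A 2000 x 1000 photo capped at 1000 pixels becomes 1000 x 500.
let poemSize = scaledSize(for: PixelSize(width: 2000, height: 1000),
                          maxDimension: 1000)
print(poemSize)
```

Using a single scale factor for both dimensions is what keeps the text from being stretched, which matters because distorted letters are harder to recognize.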

Adding the Tesseract Framework

First, you’ll have to install Tesseract OCR iOS via CocoaPods, a widely used dependency manager for iOS projects.

If you haven’t already installed CocoaPods on your computer, open Terminal, then execute the following command:

sudo gem install cocoapods

Enter your computer’s password when requested to complete the CocoaPods installation.

Next, cd into the Love In A Snap starter project folder. For example, if you’ve added Love In A Snap to your desktop, you can enter:

cd ~/Desktop/"Love In A Snap/Love In A Snap Starter"

Next, enter:

pod init

This creates a Podfile for your project.

Replace the contents of Podfile with:

    platform :ios, '12.1'

    target 'Love In A Snap' do
      use_frameworks!

      pod 'TesseractOCRiOS'
    end

This tells CocoaPods that you want to include TesseractOCRiOS as a dependency for your project.

Back in Terminal, enter:

pod install

This installs the pod into your project.

As the terminal output instructs, “Please close any current Xcode sessions and use `Love In A Snap.xcworkspace` for this project from now on.” Open Love In A Snap.xcworkspace in Xcode.

How Tesseract OCR Works

Generally speaking, OCR uses artificial intelligence to find and recognize text in images.

Some OCR engines rely on a type of artificial intelligence called machine learning. Machine learning allows a system to learn from and adapt to data by identifying and predicting patterns.

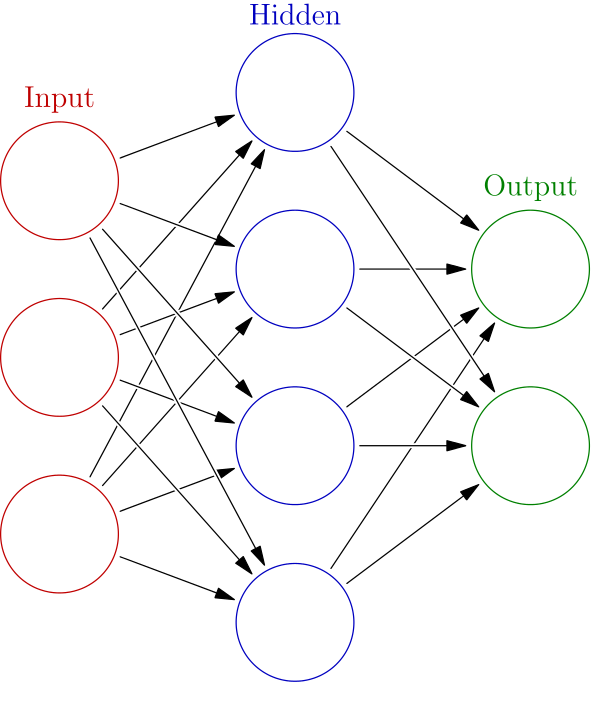

The Tesseract OCR iOS engine uses a specific type of machine-learning model called a neural network.

Neural networks are loosely modeled after those in the human brain. Our brains contain about 86 billion connected neurons grouped into various networks that are capable of learning specific functions through repetition. Similarly, on a much simpler scale, an artificial neural network takes in a diverse set of sample inputs and produces increasingly accurate outputs by learning from both its successes and failures over time. These sample inputs are called “training data.”

While educating a system, this training data:

- Enters through a neural network’s input nodes.

- Travels through inter-nodal connections called “edges,” each weighted with the perceived probability that the input should travel down that path.

- Passes through one or more layers of “hidden” (i.e., internal) nodes, which process the data using a pre-determined heuristic.

- Returns through the output node with a predicted result.

Then that output is compared to the desired output and the edge weights are adjusted accordingly so that subsequent training data passed into the neural network returns increasingly accurate results.
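The training loop described above can be sketched with a single artificial neuron, also known as a perceptron. This is a deliberately tiny illustration, not how Tesseract is trained: it has no hidden layers, it uses a hard threshold instead of a smooth activation, and the Perceptron type and the AND training data are assumptions made up for this example.

```swift
import Foundation

// A single artificial neuron with two weighted input edges and a bias.
// Simplification: there are no hidden layers in this sketch.
struct Perceptron {
  var weights = [0.0, 0.0]
  var bias = 0.0

  // Forward pass: weigh each input, add the bias, then threshold to 0 or 1.
  func predict(_ inputs: [Double]) -> Double {
    let sum = zip(weights, inputs).reduce(bias) { partial, pair in
      partial + pair.0 * pair.1
    }
    return sum > 0 ? 1 : 0
  }

  // Training: compare each prediction with the desired output and
  // adjust the edge weights in proportion to the error.
  mutating func train(samples: [(inputs: [Double], target: Double)],
                      epochs: Int,
                      learningRate: Double = 1.0) {
    for _ in 0..<epochs {
      for sample in samples {
        let error = sample.target - predict(sample.inputs)
        for i in weights.indices {
          weights[i] += learningRate * error * sample.inputs[i]
        }
        bias += learningRate * error
      }
    }
  }
}

// Hypothetical training data: the logical AND of two inputs.
let trainingData: [(inputs: [Double], target: Double)] = [
  (inputs: [0, 0], target: 0),
  (inputs: [0, 1], target: 0),
  (inputs: [1, 0], target: 0),
  (inputs: [1, 1], target: 1)
]

var neuron = Perceptron()
neuron.train(samples: trainingData, epochs: 20)

// After training, the neuron reproduces the desired outputs.
let predictions = trainingData.map { neuron.predict($0.inputs) }
print(predictions)
```

Early in training the neuron gets several samples wrong; each mistake shifts the weights a little, and after a handful of passes over the data the predictions stop changing.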

Schema of a neural network. Source: Wikipedia.

Tesseract looks for patterns in pixels, letters, words and sentences. Tesseract uses a two-pass approach called adaptive recognition. It takes one pass over the data to recognize characters, then takes a second pass to fill in any letters it was unsure about with letters that most likely fit the given word or sentence context.
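Here’s a toy sketch of that two-pass idea. It is not Tesseract’s actual algorithm: the first pass is faked as an array in which nil marks a character the recognizer was unsure about, and resolve(_:using:) is a hypothetical helper that fills the gaps from a small word list.

```swift
import Foundation

// Pass 1 (stand-in): each position holds a recognized character,
// or nil when the recognizer was unsure.
// Pass 2: pick the first dictionary word that has the same length and
// agrees with every confidently recognized character.
func resolve(_ firstPass: [Character?], using dictionary: [String]) -> String? {
  return dictionary.first { word in
    word.count == firstPass.count &&
      zip(word, firstPass).allSatisfy { letter, guess in
        guess == nil || guess == letter
      }
  }
}

// The second letter was unclear in the first pass.
let firstPass: [Character?] = ["l", nil, "v", "e"]

// Hypothetical word list standing in for language context.
let resolved = resolve(firstPass, using: ["snap", "poem", "love"])
print(resolved ?? "no match")
```

A real engine weighs many candidates and their likelihoods rather than taking the first match, but the principle is the same: context narrows down what an uncertain character can be.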

Adding Trained Data

In order to better hone its predictions within the limits of a given language, Tesseract requires language-specific training data to perform its OCR.

Navigate to Love In A Snap/Resources in Finder. The tessdata folder contains a bunch of English and French training files. The love poem you’ll process during this tutorial is mainly in English, but also contains a bit of French. Très romantique!

Your poem vil impress vith French! Ze language ov love! *Haugh* *Haugh* *Haugh*

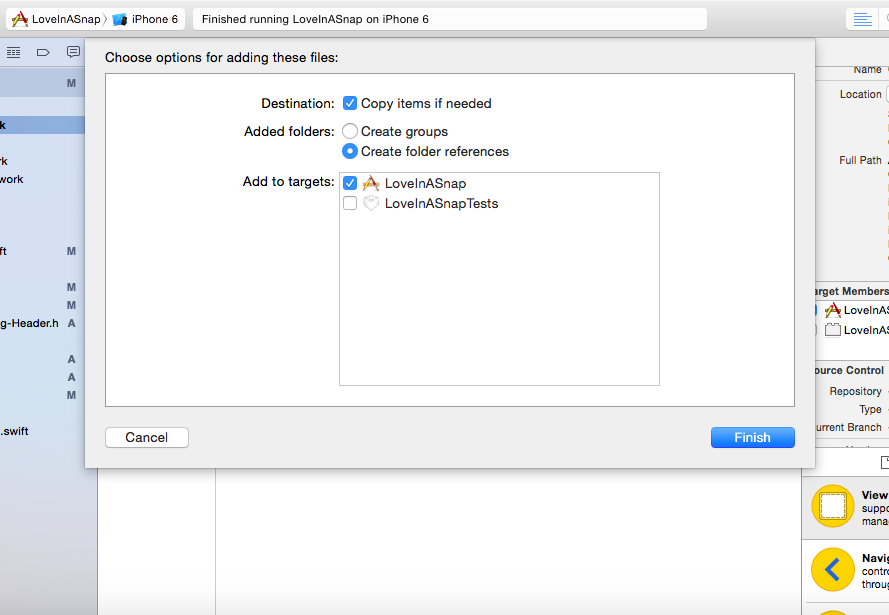

Now, you’ll add tessdata to your project. Tesseract OCR iOS requires you to add tessdata as a referenced folder.

- Drag the tessdata folder from Finder to the Love In A Snap folder in Xcode’s left-hand Project navigator.

- Select Copy items if needed.

- Set the Added Folders option to Create folder references.

- Confirm that the target is selected before clicking Finish.

Add tessdata as a referenced folder

You should now see a blue tessdata folder in the navigator. The blue color indicates that the folder is referenced rather than an Xcode group.
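If you’d like to double-check that the language files are where Tesseract expects them, a small helper along these lines can do it. It’s a sketch that assumes the files follow Tesseract’s usual language-code naming, such as eng.traineddata for English and fra.traineddata for French; missingLanguages(_:in:) is a hypothetical helper, not part of Tesseract OCR iOS.

```swift
import Foundation

// Hypothetical helper: returns the language codes whose
// "<code>.traineddata" file is missing from the given tessdata directory.
func missingLanguages(_ codes: [String], in tessdataDirectory: URL) -> [String] {
  return codes.filter { code in
    let file = tessdataDirectory.appendingPathComponent("\(code).traineddata")
    return !FileManager.default.fileExists(atPath: file.path)
  }
}

// Assumption: the referenced folder ends up in the app bundle as "tessdata".
let tessdataURL = Bundle.main.bundleURL.appendingPathComponent("tessdata")
let missing = missingLanguages(["eng", "fra"], in: tessdataURL)
if !missing.isEmpty {
  print("Missing trained data for: \(missing.joined(separator: ", "))")
}
```

An empty result means both languages are available; anything else usually points to the folder having been added as a group instead of a folder reference.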

Now that you’ve added the Tesseract framework and language data, it’s time to get started with the fun coding stuff!