Platform-Specific Code With Flutter Method Channel: Getting Started

Learn how to communicate with platform-specific code using Flutter method channels and extend the functionality of your Flutter application. By Wilberforce Uwadiegwu.

Contents

30 mins

- Getting Started

- Understanding the Method Channel

- Setting up Method Channel on iOS With Swift

- Understanding PhotoKit

- Requesting Permissions on iOS

- Fetching all Photos From iOS

- Reading Image Data From iOS

- Setting up the Method Channel on Flutter

- Building a Custom Image Provider in Flutter

- Rendering Images From the Host Device

- Rendering Selected Images

- Setting up Method Channel on Android with Kotlin

- Understanding Android’s Media API

- Requesting User Permissions on Android

- Fetching all Images From Android

- Reading Image Bytes on Android

- Consuming Method Calls From Host Platforms in Flutter

- Where to Go From Here?

The concept of cross-platform messaging was introduced in an earlier tutorial. That tutorial briefly highlighted the difference between the Event Channel and the Method Channel, and went into detail on how to stream data from the host platform to your Dart code. This tutorial focuses on cross-platform messaging with the Method Channel. Here, you’ll build Photos Keyboard, an app that replicates the behavior of Slack’s image picker. You’ll learn how to:

- Invoke methods on host platforms and listen for method calls.

- Query images from the user’s photo library.

- Build a custom image provider.

Getting Started

Download the project by clicking Download Materials at the top or bottom of this tutorial. Unzip the file and open the starter folder with the latest version of Android Studio or Visual Studio Code. The project has two directories: common and widgets. The common directory contains code used in multiple files, and the widgets directory houses widget files.

Open pubspec.yaml and click the Pub get tab that appears in your IDE. Open lib/main.dart and run the project to see this on your target emulator or device:

This tutorial is divided into three main sections.

- The first section walks you through setting up Method Channel on iOS, listening for method calls, querying the OS for photos and returning the results to Flutter.

- In the second section, you’ll complete the UI to render the images and invoke methods on the host platform.

- Finally, using Kotlin on Android, the last section guides you in setting up and listening for method calls on the Method Channel, retrieving images from the gallery and sending the decoded image bytes back to Flutter for rendering.

Understanding the Method Channel

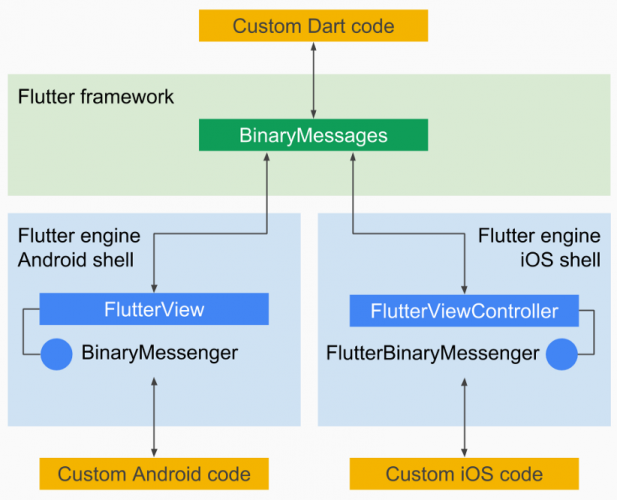

The Method Channel stems from binary messaging and the platform channel. The previously mentioned tutorial on Event Channel provided an overview of these APIs and explained how the Method Channel and Event Channel are types of platform channels. Here’s an illustration of the Platform Channel stack:

The stack of Platform Channel

The Method Channel, unlike the Event Channel, supports bidirectional method invocation. Keep in mind that a method invocation isn’t technically an “invocation”, because the API doesn’t call the function for you. As you’ll see later, when a method call arrives, you check which method was invoked and call the matching function yourself. Mikkel Ravn wrote a detailed article on Platform Channels and the design tradeoffs.
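To make the point about “invocation” concrete, here is a minimal sketch in plain Swift, without the Flutter SDK. The MethodCall struct, the handle function and the stubbed getPhotos(limit:) are hypothetical stand-ins for illustration, not Flutter APIs. The sketch only shows that a method call is a name plus arguments, and that the receiving side decides which function to run.

```swift
// Stand-in for a method call message: a method name plus optional arguments.
// (Hypothetical type for illustration; not the Flutter SDK's FlutterMethodCall.)
struct MethodCall {
    let method: String
    let arguments: Any?
}

// Hypothetical native function that the handler routes to.
func getPhotos(limit: Int) -> [String] {
    return (0..<limit).map { "photo-\($0)" }
}

// The handler inspects the method name and calls the function itself.
// It returns nil for names it doesn't recognize or arguments it can't use.
func handle(_ call: MethodCall) -> Any? {
    switch call.method {
    case "getPhotos":
        guard let limit = call.arguments as? Int else { return nil }
        return getPhotos(limit: limit)
    default:
        return nil
    }
}

let reply = handle(MethodCall(method: "getPhotos", arguments: 2))
print(reply ?? "not implemented")
```

In the real API, the reply travels back through a result callback rather than a return value, but the dispatch-by-name idea is the same.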

Setting up Method Channel on iOS With Swift

To receive method calls on iOS, you need the channel’s name and a closure. The name is like an ID for the channel and must be the same on iOS, Flutter and Android. The Flutter Platform Engine calls the closure when you invoke a method on the Method Channel from the Dart side. In this closure, you retrieve the name and parameters of the invoked method and act on them.

Start by opening Runner.xcworkspace in the starter/ios directory with Xcode. Then, declare the following variables above application() inside the AppDelegate class in AppDelegate.swift:

// 1
private var flutterResult: FlutterResult? = nil
// 2
private var fetchLimit: Int = 0
// 3
private var fetchResult: PHFetchResult<PHAsset>? = nil

The use case for the variables above is as follows:

1. Handles communication back to Flutter.
2. Maximum number of photos to fetch.
3. Reference to the photo query result.

Still in AppDelegate.swift, add this import statement below the other import statements:

import Photos

Next, add the following code above GeneratedPluginRegistrant.register(with: self) inside application():

let controller = (window?.rootViewController as! FlutterViewController)
// 1
let methodChannel =
  FlutterMethodChannel(name: "com.raywenderlich.photos_keyboard", binaryMessenger: controller.binaryMessenger)
// 2
methodChannel
  .setMethodCallHandler({ [weak self] (call: FlutterMethodCall, result: @escaping FlutterResult) -> Void in
    switch call.method {
    case "getPhotos":
      // 3
      self?.fetchLimit = call.arguments as! Int
      self?.flutterResult = result
      self?.getPhotos()
    default:
      // 4
      result(FlutterMethodNotImplemented)
    }
  })

Here’s what the code above does:

1. Initializes the channel with the name com.raywenderlich.photos_keyboard.
2. Listens for incoming method calls.
3. Loosely translated, this means “call getPhotos() when the Dart side invokes getPhotos”.
4. Handles unknown methods.
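The handler above force-casts call.arguments to Int, which crashes the app if the Dart side ever sends something else. As a hedged alternative, the sketch below validates the argument first. parseFetchLimit is a hypothetical helper, not part of the tutorial's project or the Flutter SDK, and it assumes that negative limits should be rejected while 0 is allowed (PHFetchOptions treats a fetch limit of 0 as "no limit").

```swift
// Hypothetical helper: extracts a non-negative fetch limit from the untyped
// arguments of a method call. Returns nil when the value is missing,
// isn't an Int, or is negative.
func parseFetchLimit(_ arguments: Any?) -> Int? {
    guard let limit = arguments as? Int, limit >= 0 else { return nil }
    return limit
}

// Possible use inside the "getPhotos" case of the handler (sketch only):
//
//   guard let limit = parseFetchLimit(call.arguments) else {
//     result(FlutterError(code: "bad_args",   // error code chosen for illustration
//                         message: "Expected a non-negative Int",
//                         details: nil))
//     return
//   }
//   self?.fetchLimit = limit

print(parseFetchLimit(20) as Any)
print(parseFetchLimit("20") as Any)
```

Replying with an error instead of crashing lets the Dart side catch a PlatformException and decide how to recover.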

Understanding PhotoKit

PhotoKit comprises APIs that sit between your app and local and iCloud media. These APIs essentially control access to media in the Photos app, dictating how your app reads from and writes to it. Throughout this tutorial, you’ll interact with some of these APIs, like PHPhotoLibrary, PHAsset and PHImageManager.

Requesting Permissions on iOS

On iOS, sensitive user data like the photos library is walled behind protected APIs like PhotoKit. You add a key-value pair to Info.plist to declare your app’s intention of accessing that data. The key is the ID for that data and the value is the reason your app needs the data.

Now, open Info.plist. Hover over the rows of keys on the left, and click any of the + icons that appear. Start typing “Privacy” and select Privacy – Photo Library Usage Description from the drop-down. Then, paste “Photos Keyboard needs access to photos to make them available for selection” into the value column of that same row.
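If you edit Info.plist as source rather than through Xcode's table, the raw key behind the Privacy – Photo Library Usage Description label is NSPhotoLibraryUsageDescription. The following is a small, optional sketch of a debug-time check that the entry exists. photoUsageDescription(in:) is a hypothetical helper and not part of the starter project.

```swift
import Foundation

// Hypothetical helper: returns the photo library usage description
// if the Info.plist dictionary contains a non-empty one.
func photoUsageDescription(in info: [String: Any]) -> String? {
    guard let text = info["NSPhotoLibraryUsageDescription"] as? String,
          !text.isEmpty else {
        return nil
    }
    return text
}

// In a running app, Bundle.main.infoDictionary holds the parsed Info.plist.
let info = Bundle.main.infoDictionary ?? [:]
if photoUsageDescription(in: info) == nil {
    print("NSPhotoLibraryUsageDescription is missing from Info.plist")
}
```

iOS terminates an app that requests photo library access without this entry, so a check like this can surface the problem early during development.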

Next, request permission from the user when Flutter calls getPhotos. Write this function below application():

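private func getPhotos() {
  PHPhotoLibrary.requestAuthorization { (status) in
    if (status == .authorized) {
      // The photo query goes here.
    } else {
      // Send the raw Int value: the enum itself can't be encoded by the channel's codec.
      let error =
        FlutterError
          .init(code: "0", message: "Not authorized", details: status.rawValue)
      self.flutterResult?(error)
    }
  }
}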

PHPhotoLibrary.requestAuthorization() displays a permission prompt to the user, and status is the user’s response.

Fetching all Photos From iOS

Now that you have the user’s blessing to access their photos, read the photos and return the result to Flutter. Add the following code inside the if branch of the previous statement:

let options = PHFetchOptions()
options.fetchLimit = self.fetchLimit
options.sortDescriptors =
  [NSSortDescriptor(key: "creationDate", ascending: false)]
self.fetchResult = PHAsset.fetchAssets(with: .image, options: options)
var results: [String] = []
self.fetchResult?.enumerateObjects { asset, count, stop in
  results.append(asset.localIdentifier)
}
self.flutterResult?(results)

This fetches up to fetchLimit images, sorted by creation date with the newest first, collects the local identifier of each result and returns the list of IDs to Flutter.